Crisis Discipline

Stewardship of Ourselves

Working behind the scenes in the news media sector, I have found it increasingly clear that tech and the internet as they operate today are causing structural damage to our collective institutions that runs deeper than seems to be understood. But these changes can be hard to characterise. If you take advertising revenue from high-quality contexts and use it to subsidise conspiracy theories, it's pretty obvious that nothing good will happen, yet we barely have an understanding of the data economy sufficient to put a critique of this transfer on solid footing. If you move editorial decisions about what information is made more prominent to people from tens of thousands of career editors working around the world, with methods that are highly diversified in biases, culture, skill, or politics, to a tiny number of algorithms that are everywhere uniform, what effect can you expect? Reputational and market-driven accountability will be removed, which is evidently bad. The massive simplification of this ecosystem also seems very likely to have deep-running consequences of its own, but how do you begin looking for those?

In many ways, I feel like the farmer pointing out that her livestock began dying in strange ways after that plant moved in next door, or the fisherman noting that the fish don't come back for as long a season as they used to since those structural fishery reforms. Why pay attention to the folks in the trenches, who are clearly just afraid of change and innovation, when it's obvious that the nice engineers are working so hard and so seriously at delivering better living?

With this in mind, I couldn't be more excited about the research project outlined in Stewardship of global collective behavior, recently published in PNAS by a cast of stellar workers across multiple disciplines. Read it if you haven't already. If you'd rather check out a summary first, Carl Bergstrom has a thread for you. It's only the very beginning of something, but I felt like it expertly and efficiently pointed at several of the questions that I have been drunkenly clutching at in the dark by stumbling haphazardly across disjoint swathes of scholarly literature.

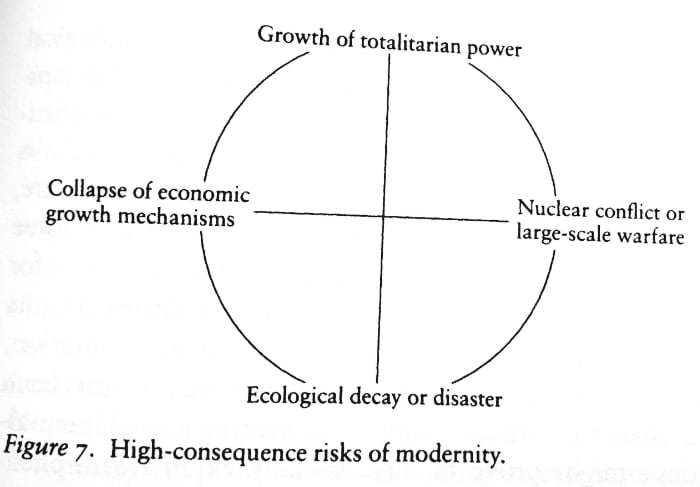

The paper's core idea is straightforward: complex systems can be highly resilient to perturbation, but that resilience is finite. Once such a system is pushed outside of its homeostatic regime, it undergoes sudden, catastrophic, and irreversible loss of functionality. The deployment of internet technology is evidently a massive perturbation of our social dynamics — but we lack the intellectual tooling to analyse its effects as they are taking place. "Expanding the scale of a collectively behaving system by eight orders of magnitude is certain to have functional consequences," the authors aptly tell us. Indeed. But "we have little insight into how the millions of seemingly minor algorithmic decisions that shape information flows every second might be altering our collective behavior." To address this, they are working to establish a "crisis discipline" around this nexus of issues, which would bring transdisciplinary academics, policymakers, and technologists together to understand and address the degradation of the complex system that is our social dynamics. I couldn't agree more: this paper was published just as I was drafting a W3C workshop on the need to bring diversified expertise together to figure out how to govern the Web, which is clearly in a bad place.

I have questions for this crisis discipline. I will sketch them out briefly here, and hope that I may return to them later.

The first (and perhaps foremost) of my concerns is the impact that the perturbation of our social dynamics may have on our collective cognitive abilities. In Cognitive Democracy, Henry Farrell and Cosma Shalizi make the (credible) case that democracy is intrinsically better at solving complex problems (of the kind that have rugged solution landscapes) than markets or hierarchies/bureaucracies. Their argument is simple:

- Democracies foster a diversity of viewpoints being shared broadly (with much higher bandwidth than the price mechanism can support). Hong & Page have shown that sometimes groups of diverse problem solvers outperform more uniform groups of high-ability solvers and offered a plausible model indicating that this may be common. This helps explore more of the solution landscape and share information about it.

- Democracies are better at promoting equality, which avoids issues in which one party can impose its solutions from excess of power even if they are less good. This helps ensure that the best solution is selected from the set that has been explored.

Evidently, real-world democracies do not implement either of these perfectly, but what we need is for them to get closer than alternatives, and to keep getting closer. Effective collective cognition at the level we require to face the emergencies of our time, chief amongst which is the climate emergency, relies on our ability to maintain social dynamics that support these features. "Democratic structures must be shaped so that social interactions and cognitive function reinforce each other," say Farrell & Shalizi.

There is good reason to believe that the structural features that support collective cognition are being damaged. In Stewardship, Joe Bak-Coleman et al. flag the concern that so much is driven by algorithms that are profit-maximising more than anything else. I agree that that is plausibly an issue, but I am far more concerned with how uniform these algorithms are across huge populations. The underlying insight that explains why diverse groups are better at complex problems is that a diverse set of intellectual tools and viewpoints will be better at finding solutions on a rugged landscape. In mediating so much of humankind's discovery through the tiny funnel of a handful of systems, we are creating an unprecedented impoverishment of our intellectual toolbox. I am far less concerned about filter bubbles than I am about turning a complex, likely scale-free network of discovery into a fully-mediated hub-and-spokes structure in which everything flows through a system of very limited variety. It would be very surprising if this level of cognitive normalisation, replacing or mediating billions of idiosyncratic explorations, did not have a deleterious impact on collective cognition.
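To make the "diverse tools on a rugged landscape" intuition a little more tangible, here is a toy simulation. It is a minimal sketch loosely inspired by the kind of model Hong & Page describe, not a reproduction of their work: the ring-shaped landscape, the step-size heuristics, and every name and parameter (`climb`, `group_climb`, `experiment`, `max_step`, and so on) are assumptions made purely for illustration. Each agent is a short list of step sizes it is willing to try, and a group searches by letting its members take turns improving on the current best point.

```python
import random


def climb(landscape, start, heuristic):
    """Hill-climb on a ring: try each step size in turn, move whenever it improves."""
    n = len(landscape)
    pos = start
    improved = True
    while improved:
        improved = False
        for step in heuristic:
            candidate = (pos + step) % n
            if landscape[candidate] > landscape[pos]:
                pos = candidate
                improved = True
    return pos


def group_climb(landscape, start, group):
    """Agents take turns from the current point until nobody can improve it."""
    pos = start
    while True:
        before = pos
        for heuristic in group:
            pos = climb(landscape, pos, heuristic)
        if pos == before:  # a full round without any move: everyone is stuck
            return pos


def average_score(landscape, group):
    """Mean value reached by the group, averaged over every starting point."""
    n = len(landscape)
    return sum(landscape[group_climb(landscape, s, group)] for s in range(n)) / n


def experiment(seed=0, n=200, max_step=12, k=3, pool_size=60, group_size=8):
    """Compare the best solo agent, a group of top solo agents, and a random group."""
    rng = random.Random(seed)
    landscape = [rng.random() for _ in range(n)]  # rugged: neighbours are unrelated
    pool = [tuple(rng.sample(range(1, max_step + 1), k)) for _ in range(pool_size)]
    ranked = sorted(pool, key=lambda h: average_score(landscape, [h]), reverse=True)
    return {
        "best individual": average_score(landscape, ranked[:1]),
        "group of best": average_score(landscape, ranked[:group_size]),
        "random group": average_score(landscape, rng.sample(pool, group_size)),
    }


if __name__ == "__main__":
    for label, score in experiment().items():
        print(f"{label}: {score:.3f}")
```

Whether the random (more varied) group beats the group of top performers depends on the seed and parameters, so this proves nothing on its own. What it does show is the mechanism: agents that rank highly on the same landscape tend to share step sizes and so get stuck in the same places, whereas a more varied set of heuristics has more ways out of any given local peak.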

I also worry about the effect that unified identity may have on sorting, and that sorting has on our ability to communicate ideas broadly and to build societal consensus. In healthy social environments, identity is fragmented. I am not the same person at home, at work, at my local dive bar, in a tech policy meeting, or with different groups of friends — and neither are you. The ability to present differently in different contexts is key to who we are and, according to the looking-glass theory of self, is key to our ability to find and develop our social selves. Presenting differently according to context is also an important layer of protection for the less powerful. A huge chunk of our tech infrastructure — social media, SSOs, browsers — is working against the fragmentation of identity by enforcing a unified identity across completely unrelated contexts. Such a brutal shift in how we define and present ourselves is almost certain to have psychological and behavioural ramifications, though we don't know what they are.

I also hypothesise that the forced unification of identity drives sorting. Sorting is an ongoing social process that is driving social clusters to be increasingly homogeneous and divided from one another. Initially, a given socio-economic group might exhibit a diversity of religions, politics, pastimes… In turn, a given religious community will have people from different socio-economic groups, politics, pastimes… and so on. When different groupings do not align, they enable people from different viewpoints to come into contact and communicate, a key component of Cognitive Democracy as described above. When knowing one of these variables about someone allows you to predict the others with a high degree of certainty, this means that the groupings align and cross-society communication (in the sense of exposure to diverse others) drops. That's sorting.
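One way to put a number on "knowing one variable lets you predict the others" is mutual information. This is my own illustrative choice of measure, not something taken from the sorting literature cited here, and the tiny populations below are invented for the example. The sketch computes how many bits one attribute tells you about another: zero when the groupings cut across each other, higher as they align.

```python
from collections import Counter
from math import log2


def mutual_information(pairs):
    """Mutual information (in bits) between two attributes, from (a, b) observations."""
    n = len(pairs)
    joint = Counter(pairs)
    left = Counter(a for a, _ in pairs)
    right = Counter(b for _, b in pairs)
    # Sum over observed combinations of p(a, b) * log2(p(a, b) / (p(a) * p(b))).
    return sum(
        (c / n) * log2((c * n) / (left[a] * right[b]))
        for (a, b), c in joint.items()
    )


# Hypothetical unsorted society: hobby tells you nothing about politics.
unsorted_society = [
    ("knitting club", "left"), ("knitting club", "right"),
    ("macramé club", "left"), ("macramé club", "right"),
]

# Hypothetical sorted society: hobby fully predicts politics.
sorted_society = [
    ("knitting club", "left"), ("knitting club", "left"),
    ("macramé club", "right"), ("macramé club", "right"),
]

if __name__ == "__main__":
    print(mutual_information(unsorted_society))  # 0.0 bits
    print(mutual_information(sorted_society))    # 1.0 bit
```

In the first population the knitting club is a place where left and right still meet; in the second it no longer is, and the measure moves from 0 to 1 bit accordingly.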

In a world of fragmented identities, the folks from my knitting club might often be radical anarchists that I may not want my coworkers at the bank to associate me with; conversely, my pals from my macramé reading club might overindex conservative but I don't care because all we talk about is macramé, and even in a world in which all artefacts are political, macramé tends to remain pretty open-minded. If, however, we bring all this merry crew into a world of unified identity and spill them out in a big, flat, structureless mosh pit of social media, then there will increasingly be people I cannot be seen with and people whose whole selves I can't stomach to see.

At the scales we are dealing with, this should plausibly lead to increased sorting. What's more, the structurelessness you get from smushing all these contexts together creates its own power hierarchies (see The Tyranny of Structurelessness). Both of these factors work against social dynamics that promote collective cognition.

A third concern I have (but have yet to prove in this context) is the effect of disempowerment on intrinsic motivations to cooperate. As Elinor Ostrom summarises in Understanding Institutional Diversity, interventions designed to make people more cooperative can instead durably deplete their motivation to cooperate, and this even when it is clear that cooperating would be in their interest. She notably describes an experiment in which several control groups are made to play variations on the prisoner's dilemma, with results that largely align with the theory. At the same time, a treatment group is made to play the same game but in the presence of an enforcer who forces them to cooperate. Naturally, the treatment group, being forced to cooperate, achieves a better payout and can readily see that it achieves a better payout. However, if you then remove the enforcer, the treatment group will cooperate significantly less than the controls. Whatever intrinsic motivation its members had to cooperate gets durably "crowded out" by the enforced cooperation. Ostrom further describes a number of other experiments and field observations that point in the same direction.

Operating a website or trying to run a group on a social platform happens under conditions of enforcement similar to those of that treatment group. At regular intervals, someone from the central bureau at one of the big platforms will give you a new rule about some arbitrary performance metric, how things get shared, what will now float to the top, or how ads work. If you're big enough, someone might listen to your concerns before ignoring them, but by and large you have zero say in these enforced rules that someone designed for your own good. Not all of the rules are bad; there's a small subset that could make sense with a few tweaks. But they all share the same "because we decided that's better for you" structure. My prediction based on the literature would be that the more such forced-improvement interventions get rolled out, the less invested in the quality of their production people will be. We can expect compliance when it is rewarded, but we should also expect the intrinsic motivation to cooperate on a better internet to get crowded out. Again, at this scale and given how key the internet has become to our social dynamics, it is hard to imagine that this would not have toxic side-effects.

A final concern I will discuss here (I have more, but I have to stop writing somewhere) is the effect of building an internet economy on the back of defunding high-cost, quality content in order to subsidise a stream of low-cost, largely low-quality content that is often misinformation, conspiracy, or hate garbage. It is common to state that we have misinformation and radicalisation problems because a lot of that content drives engagement, engagement can be monetised with ads, and recommendation engines therefore drive towards such content at scale. There is plausibly some truth to that, but I don't believe it is the whole truth or even the most important part of the truth, because that's not how advertising works.

Advertising is valuable to its customers when it can drive a given performance metric, for instance people buying more of your stuff or forming a better opinion of your brand. The way such a metric is driven up is by having access to good predictors that someone will be interested in a given product or brand (eg. they are reading a comparison of coffee makers, so you might be able to interest them in your new coffee brand). If what you know about someone is just that they watch a lot of antivax videos, there might be a few products you can target them with, but overall you can't predict that much about them that is of any use in advertising. Worse: there's a built-in self-defeating factor in this business model, in that the more someone's behaviour is driven by your recommendation engine, the less you learn about them (and therefore the less you can accrue new predictions about what they might be interested in buying).

This would seem to naturally limit anyone's ability to monetise garbage content at scale. Unless, that is, it is possible to observe people's behaviour in environments that are rich in their ability to produce advertising predictions (eg. a product comparison page, original reporting, travel writing) and then use that information to target them where it's cheap, next to some steaming garbage that someone else paid to make. Of course, it's still safer (and overall more effective) to advertise in the high-quality context, but it's a lot cheaper to do so in the low-quality one, and the production of low-quality content can be massively scaled up with relative ease. Sound familiar? It should, because it's the world we live in. If you can sit in the middle, learning from quality contexts to subsidise garbage ones, you can make a lot of money on the value differential. Would such a system have negative effects? That's almost certain. It's easy to see how it would provide a highly effective way to carry out what Henry Farrell and Bruce Schneier call "common-knowledge attacks on democracy." But even in this case, we only have relatively early-stage research on how the loss of journalistic coverage is affecting democratic institutions, and we have at best a relatively superficial understanding of revenue transfers in the data economy. We might want to find out; soon wouldn't be bad.

As we consider solutions, we should keep in mind that engineering is the discipline least competent at the stewardship of complex systems. This is not a criticism: the whole point of engineering is to get rid of complexity. Whether you're crossing a bridge or relying on a crypto library to protect your data, it's generally considered a good thing to avoid the surprises that come with emergent behaviour. But complex systems need to remain complex, or they collapse. The large-scale engineering of ecosystems, cities, economies, or societies has a history of abject failure, as any reader of James C. Scott's Seeing Like A State will readily tell you. (These ideas are slowly coming to tech; for instance, Maria Farrell introduced them through ISOC.) More engineering isn't the answer here: what we need is the kind of transdisciplinary approach that can establish a difference between scaling a system and just biggering it.

One approach that I think could contribute to a clearer understanding of our predicament is to analyse technology (a fancy word to say "way of doing something") using frameworks borrowed from the analysis of institutions (a fancy word to say "the way we do things in this area"). Collective applications of technology are institutions. For instance, Ostrom's work on what separates rules from norms (rules without an "or else" component), and norms from shared strategies (norms without a may/must/must not "deontic" component), could illuminate the difference between technologies that increase craft, agency, and intrinsic motivation to cooperate (eg. the Unix philosophy) and technologies of control, which Ursula Franklin described as creating cultures of compliance.

I could ramble on for a while, but to my mind the point is clear: we need this crisis discipline, and we need it today.

Many thanks to Michael Nielsen for his feedback.